Schools and higher education institutions have been forecasting the emergence of artificial intelligence and its impact on education for several years (Horizon Report, 2017). Many of these forecasts have focused on learning aids and advances in library search. More recently, discussion has turned to the responsible use of data and the ethics of using AI in educational institutions. These topics raise security, ethical, and privacy concerns, and IT security was named the number one IT issue in 2016. These subjects are still being debated, and the line between enhancing educational opportunities and creating dangerous vulnerabilities is being examined. What data should be available to researchers? What data should AI have access to? How transparent should AI algorithms be? What guidelines should govern their use? What level of security is needed to protect student data and locations? With the possible advent of dispersed learning, these questions are more salient than ever, and we must be careful not to undermine the education industry’s progress in technology because of overblown fears.

EDUCAUSE has labelled these concerns with AI as “Danger” and suggests that academia may decide that artificial intelligence and its associated problems are too much to bear (Alexander, 2019). The forces driving this discussion include legal, societal, geopolitical, security, and economic factors. Legally, institutions have accepted these challenges and mitigated them with sound contracting and “legalese,” but this hampers some innovation. In academia, some are calling for an outright end to most AI platforms because of the danger that they will be used to invade privacy or disenfranchise certain groups (Zuboff, 2019). Many organizations are converging to offer input and solutions for ethical machine learning and artificial intelligence. Microsoft, IBM, and Google are a few examples of sponsors, and these watchdog organizations will likely grow in scope and power as government becomes involved. Economically, many are pushing for artificial intelligence to capitalize on its ability to streamline management, broaden access to data and learning, aid learning, and tailor learning environments. It is true that data science has already improved the speed and capacity of teaching, learning, and managing education.

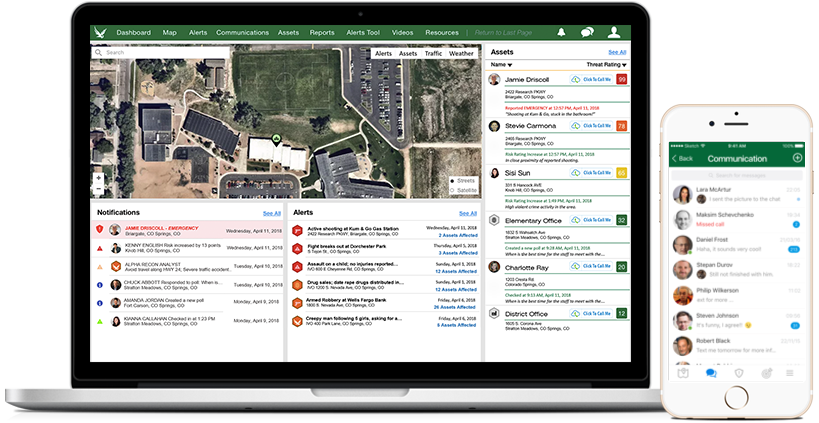

The security “danger” issues span cybersecurity, child endangerment, geopolitical pressures, and data privacy. Home learning and the move to dispersed systems have amplified vulnerabilities and widened the attack surface. Risk management in education has become more complex, and bad actors are increasingly able to exploit information or target students and staff. Fortunately, many technology companies, such as IBM, are helping to secure access, data, and cyber assets, in many cases by using AI to do it. However, the dangers of AI technology also extend to international threats, including intellectual property theft and espionage. Recently, arrests were made at universities of spies who had posed as students working in laboratories. With data technology becoming state-sponsored and widely available, educational institutions must now consider the source of the technology they adopt. Data access and protection in intelligent risk and performance systems are also under rigorous review, with the value of early warning of failure or threats being weighed against student privacy. The United States lags behind other countries with respect to personal data and privacy rights, although this has its advantages as well.

In summary, current trends in using ML/AI in the education industry show cautious optimism and identify several areas where this growing technology can yield great benefit: management, operations, tutoring, data mining, and security. As long as AI is used transparently and the necessary steps are taken to protect against unfairness and security breaches, AI should be seen as a tool that can continue to develop the education industry and its teaching capacity. We are not close to achieving artificial general intelligence, in which machines can learn independently across domains, but within the next five years AI is likely to help save lives, deliver advanced education more successfully, and reduce costs through the efficiencies it generates. Ethics and the other “dangers” will need to be assessed continually, but this is a small price to pay to enhance the education of citizens around the world.

References

Alexander, B. (2019). 5 AIs in search of a campus. *EDUCAUSE Review*. https://er.educause.edu/articles/2019/10/5-ais-in-search-of-a-campus

Zuboff, S. (2019). *The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power*. New York: PublicAffairs (Hachette Book Group).